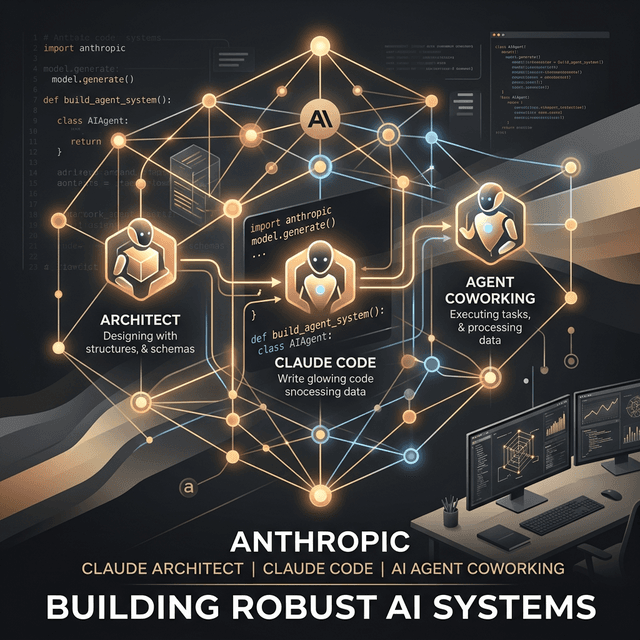

Recently, Anthropic launched the Claude Certified Architect – Foundations certification, structured across four main areas. It's the kind of launch where your first reaction might be: "Ah, just another theoretical course and a badge to slap on LinkedIn." But an insightful thread from @hooeem on X quickly dismantled that idea. The curriculum behind it is a remarkably clear map of how we should be building AI agents that hold up in practice: not fragile demos you show off at a conference, but production-grade architecture.

What the thread highlights is very pragmatic. The exam doesn't (just) test abstract theories; it forces you to understand where most developers actually fail when they "play around" with Anthropic MCP (Model Context Protocol), Claude Code, and autonomous agents.

We read through the arguments and translated them into plain English, extracting the core of this "hidden guide." No unnecessary jargon, just the critical steps if you want your AI app to avoid going off the rails at the first exception.

1. Tool Design & MCP: Descriptions are the heart of the agent

The first trap we all fall into is thinking the LLM is smart enough to "intuit" what a program or function in our project does. In Anthropic's curriculum, Tool Design & MCP covers about 18% of the exam. And this is where @hooeem's critical observation comes in:

"Tool descriptions are the most neglected element in any agentic system. The model routes ONLY based on the description. Vague descriptions lead to misrouting."

The model doesn't read the internal logic of your tool. It doesn't have a deep intuition about what that generically named fetchData() function can do. It sees a name, reads your explanatory text, and makes a decision. Wrote an ambiguous or lazy description? Expect the agent to pick the completely wrong tool or refuse to use it altogether.

You don't solve this by inventing a complex routing system, adding some over-engineered classifier, or throwing dozens of few-shot examples at it. The solution is simple and straightforward: you need to write clear descriptions for your tools, with intentions, inputs, and outputs explicitly explained.
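To make the contrast concrete, here is a minimal sketch of a lazy tool definition next to a clear one, using the name / description / input_schema shape of a tool definition. The invoice tool, its fields, and the `description_gaps` lint helper are all invented for illustration; the 15-word threshold is an arbitrary assumption, not a rule from the curriculum.

```python
# Hypothetical tool definitions; the tools themselves do not exist anywhere.
vague_tool = {
    "name": "fetchData",
    "description": "Fetches data.",
    "input_schema": {
        "type": "object",
        "properties": {"id": {"type": "string"}},
        "required": ["id"],
    },
}

clear_tool = {
    "name": "get_invoice_by_id",
    "description": (
        "Look up a single customer invoice by its invoice ID. "
        "Use this when the user asks about the status, amount, or due date "
        "of one specific invoice. Do not use it to search by customer name. "
        "Returns the amount, currency, status, and due date, "
        "or an error if no invoice has that ID."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "invoice_id": {
                "type": "string",
                "description": "Invoice identifier exactly as printed on the invoice.",
            }
        },
        "required": ["invoice_id"],
    },
}


def description_gaps(tool: dict, min_words: int = 15) -> list[str]:
    """Crude lint: flag descriptions too thin to state intent, inputs, and outputs."""
    problems = []
    if len(tool.get("description", "").split()) < min_words:
        problems.append("description is too short to explain intent, inputs, and outputs")
    properties = tool.get("input_schema", {}).get("properties", {})
    for name, spec in properties.items():
        if not spec.get("description"):
            problems.append(f"parameter '{name}' has no description")
    return problems


print(description_gaps(vague_tool))
print(description_gaps(clear_tool))
```

A word count obviously can't judge quality, but even a check this crude catches the "Fetches data." class of description before it reaches the model.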

Don't give it too many tools at once

Another rule of thumb from the thread: don't hand 18 tools to a single agent. When you give it such a massive possibility space, models get confused in the selection process. The healthy approach? Create specialized sub-agents. One with 3-4 tools for data extraction, another with 3-4 tools for writing. All overseen by an orchestration layer that passes execution to the right sub-agent.
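As a rough sketch of that split, the snippet below groups tools into small sub-agents and lets an orchestration layer hand work to one of them. Every name here (the sub-agents, the tool names, the `route` function) is hypothetical, and the dictionary lookup is only a stand-in: in a real system the orchestrator would typically be a model deciding where to delegate.

```python
from dataclasses import dataclass

MAX_TOOLS_PER_AGENT = 4  # rule of thumb from the thread, not a hard limit


@dataclass(frozen=True)
class SubAgent:
    name: str
    purpose: str
    tools: tuple[str, ...]

    def __post_init__(self):
        # Fail loudly if someone keeps piling tools onto one agent.
        if len(self.tools) > MAX_TOOLS_PER_AGENT:
            raise ValueError(f"{self.name} has {len(self.tools)} tools; split it up")


# Hypothetical sub-agents, each with a narrow job and a short tool list.
SUB_AGENTS = {
    "extraction": SubAgent(
        "extraction",
        "Pull structured facts out of documents and databases.",
        ("read_pdf", "query_database", "fetch_url"),
    ),
    "writing": SubAgent(
        "writing",
        "Turn extracted facts into a readable report.",
        ("draft_section", "check_style", "format_citations"),
    ),
}


def route(task_type: str) -> SubAgent:
    """Stand-in orchestrator: pick the sub-agent responsible for a task type."""
    try:
        return SUB_AGENTS[task_type]
    except KeyError:
        raise ValueError(f"no sub-agent handles task type '{task_type}'") from None


print(route("extraction").tools)
```

Each sub-agent only ever sees its own three tools, so the selection problem stays small no matter how many capabilities the whole system has.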

2. The CLAUDE.md Trap: Why your AI fails for the rest of your team

In the Claude Code Configuration section (around 20% of the certification), they touch on a topic many teams treat superficially: the CLAUDE.md file hierarchy.

You have three levels where you can provide instructions, from general to specific:

- User-level: The file is on your local machine (e.g., in your home directory).

- Project-level: In the repository, visible to all components of that solution.

- Directory-level: Specific to a clear context, from folders like docs/ and front-end/ down to targeted modules.

Where do the biggest issues arise? You guessed it, with purely local setups. The developer configures the agent perfectly on their own laptop (at the user level). The agent delivers exceptional code daily. But when a colleague pulls the project from git, their assistant is a disaster. Why? Because the critical rules weren't saved in version control. For a predictable workflow, the application's rules absolutely must live in the organization's repository.
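A small script can make the problem visible. The sketch below lists which instruction files apply to a given working directory and which of them a teammate would actually receive from the repository. It assumes the user-level file lives at `~/.claude/CLAUDE.md` and that the other levels are files named `CLAUDE.md` inside the repo. The function name and the temporary folder layout are made up for the demo, and this is only an approximation for auditing, not Claude Code's own loading logic.

```python
import tempfile
from pathlib import Path


def instruction_layers(home: Path, repo_root: Path, working_dir: Path) -> list[tuple[str, Path]]:
    """List existing CLAUDE.md files from most general to most specific."""
    layers = []
    user_file = home / ".claude" / "CLAUDE.md"  # assumed user-level location
    if user_file.is_file():
        layers.append(("user", user_file))
    project_file = repo_root / "CLAUDE.md"
    if project_file.is_file():
        layers.append(("project", project_file))
    # Walk from the repo root down to the working directory.
    current = repo_root
    for part in working_dir.relative_to(repo_root).parts:
        current = current / part
        candidate = current / "CLAUDE.md"
        if candidate.is_file():
            layers.append(("directory", candidate))
    return layers


# Demo on a throwaway folder tree so the example touches nothing real.
with tempfile.TemporaryDirectory() as tmp:
    home = Path(tmp) / "home"
    repo = Path(tmp) / "repo"
    docs = repo / "docs"
    (home / ".claude").mkdir(parents=True)
    docs.mkdir(parents=True)
    for path in (home / ".claude" / "CLAUDE.md", repo / "CLAUDE.md", docs / "CLAUDE.md"):
        path.write_text("# rules\n")

    layers = instruction_layers(home, repo, docs)
    levels = [level for level, _ in layers]
    # Only files inside the repository can travel with a git pull.
    shared_levels = [level for level, _ in layers if level != "user"]

print(levels)
print(shared_levels)
```

If a rule the whole team depends on shows up only in the "user" layer, that is the rule your colleague's assistant will never see.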

3. JSON Schemas guarantee structure, not the truth

The entire industry loves Structured Outputs. It's incredibly satisfying to define a strict JSON Schema, throw it at the model, and get back perfectly formatted, easily parsable data. However, as this curriculum points out:

"tool_use with JSON schemas gets rid of syntax errors, not semantic errors."

In plain terms, the LLM will perfectly format the most outrageous lies. It will incorrectly associate data or draw flawed conclusions, but it will respect your data types like an obedient student. It's beautifully packaged, successfully read into a function, but it contains a mirage. The solution we all need to adopt is the mandatory integration of automated tests and business validations on every valuable output produced by the LLM. The schema guarantees the parse, not the business logic.
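Here is a minimal sketch of what a business validation layer can look like once the schema has done its job. The invoice shape, the field names, and the two rules are assumptions chosen for illustration; the point is only that the payload below parses cleanly and is still wrong.

```python
import json


def validate_invoice(raw_json: str) -> list[str]:
    """Semantic checks that a JSON Schema cannot express on its own."""
    data = json.loads(raw_json)  # by this point the structure is already guaranteed
    errors = []

    # Rule 1: the line items must add up to the stated total (amounts in cents).
    line_total = sum(item["quantity"] * item["unit_price_cents"] for item in data["line_items"])
    if line_total != data["total_cents"]:
        errors.append(f"total_cents is {data['total_cents']} but line items sum to {line_total}")

    # Rule 2: ISO 8601 dates compare correctly as plain strings.
    if data["due_date"] < data["issue_date"]:
        errors.append("due_date is earlier than issue_date")

    return errors


# Perfectly typed, perfectly parsable, and semantically wrong on both counts.
model_output = json.dumps({
    "issue_date": "2024-05-01",
    "due_date": "2024-04-01",
    "total_cents": 4000,
    "line_items": [{"quantity": 2, "unit_price_cents": 1500}],
})

print(validate_invoice(model_output))
```

Checks like these belong in ordinary code with ordinary tests, so a failure can trigger a retry or a human review instead of flowing silently downstream.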

4. Don't let the AI invent: Use Nullable fields

It's a subtle but essential detail. Imagine your agent needs to extract a license plate number from a traffic report. If you declare the field in the JSON schema as a required string, the LLM feels pressured to provide a value. And if it doesn't find one in the text... well, it will just make something up. Suddenly, a plate number it considered "fitting" appears in a registry. That's pure structural hallucination.

How do you stop this narrative instinct? You mark those fields as nullable. If the model learns that it's perfectly fine to return a clean, honest null when it has no proof in the text, it saves you from a logical, decisional, or even financial disaster down the line. No fear of null. That's the beauty of having better control over the context.
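For the license plate example, a nullable field could be sketched like this. The schema uses the standard JSON Schema form `"type": ["string", "null"]`; the field names, the description wording, and the `handle_extraction` routing are assumptions for illustration, and whether a given structured-output mode accepts this exact form is worth checking against its documentation.

```python
# Hypothetical extraction schema: the key must be present, but null is a valid answer.
report_schema = {
    "type": "object",
    "properties": {
        "license_plate": {
            "type": ["string", "null"],
            "description": (
                "Plate number exactly as written in the report. "
                "Return null if the report does not state one. Never guess."
            ),
        },
        "summary": {"type": "string"},
    },
    "required": ["license_plate", "summary"],
}


def handle_extraction(result: dict) -> str:
    """Treat null as a normal outcome, not as an error to paper over."""
    if result["license_plate"] is None:
        return "needs_manual_review"
    return "auto_register"


print(handle_extraction({"license_plate": None, "summary": "Plate not visible in photo."}))
print(handle_extraction({"license_plate": "B 123 ABC", "summary": "Rear-end collision."}))
```

Keeping the field required but nullable forces the model to answer the question explicitly, while still giving it an honest way to say "not in the text".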

The Ultimate Exercise: The first domain in practice

The thread also offers a practical shortcut. To internalize everything that matters for Agentic Architecture, try building a small agent equipped with:

- 3-4 functional MCP tools with flawless descriptions.

- Stop Reason Handling: The agent should programmatically justify why it stopped – did it finish a process, hit the maximum retry limit, or run out of tokens?

- A PostToolUse Hook: A simple automated step to normalize/process data formats immediately after an action completes, before the next reasoning round.

- A Policy Interception Hook: A guardian that reviews the returned or processed content and is ready to outright deny the operation if any ethical or security policy is violated.
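The skeleton of that exercise might look like the sketch below. It runs against scripted turns instead of a live model so it stays self-contained. The `stop_reason` values `tool_use`, `end_turn`, and `max_tokens` mirror the ones the Messages API reports; everything else (the tool names, the hook functions, the step limit) is hypothetical. The hooks are plain Python stand-ins rather than Claude Code's hook configuration, and as a design choice this policy hook inspects the tool call before it runs instead of the returned content.

```python
from typing import Callable, Optional

MAX_STEPS = 5  # assumed safety limit on loop iterations


def policy_hook(tool_name: str, tool_input: dict) -> Optional[str]:
    """Return a denial reason if the call violates policy, else None."""
    if tool_name == "delete_records":
        return "destructive operations require human approval"
    return None


def post_tool_use_hook(tool_result: dict) -> dict:
    """Normalize tool output before the next reasoning round."""
    return {
        key.lower(): value.strip() if isinstance(value, str) else value
        for key, value in tool_result.items()
    }


def run_agent(scripted_turns: list[dict], tools: dict[str, Callable[..., dict]]) -> dict:
    """Drive the loop and always report why it stopped."""
    results = []
    for step, turn in enumerate(scripted_turns):
        if step >= MAX_STEPS:
            return {"stopped_because": "max_steps", "tool_results": results}
        reason = turn["stop_reason"]
        if reason == "end_turn":
            return {"stopped_because": "finished", "tool_results": results}
        if reason == "max_tokens":
            return {"stopped_because": "out_of_tokens", "tool_results": results}
        if reason == "tool_use":
            denial = policy_hook(turn["tool"], turn["input"])
            if denial is not None:
                return {"stopped_because": "policy_denied", "detail": denial, "tool_results": results}
            raw = tools[turn["tool"]](**turn["input"])
            results.append(post_tool_use_hook(raw))
            continue
        return {"stopped_because": f"unhandled stop_reason: {reason}", "tool_results": results}
    return {"stopped_because": "script_exhausted", "tool_results": results}


# Hypothetical tool with messy output, to give the normalizing hook something to do.
tools = {"lookup_order": lambda order_id: {"Order_ID": f" {order_id} ", "Status": "shipped "}}

happy_path = run_agent(
    [
        {"stop_reason": "tool_use", "tool": "lookup_order", "input": {"order_id": "A1"}},
        {"stop_reason": "end_turn"},
    ],
    tools,
)
blocked = run_agent(
    [{"stop_reason": "tool_use", "tool": "delete_records", "input": {}}],
    tools,
)
print(happy_path)
print(blocked)
```

The detail to notice is that every exit path returns an explicit `stopped_because`, so a truncated answer can never be mistaken for a finished one.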

It seems overwhelming at first, but these exact concepts are what separate some simple if-else blocks wrapping the OpenAI or Claude API from a true architecture you can scale alongside your clients.

Checklist for Non-Technical Roles: Vital questions for your new AI projects

Even if your role is more product or coordination-focused, this guide behind the Claude certification feels perfectly at home in a technical review or negotiation with a fresh team:

- How many direct actions (tools) can this agent use? The healthy answer is a maximum of 4-5. If they pitch you "The Supreme Agent with 24 ready-to-call tools," halt the process immediately.

- How do we ensure with these JSON schemas that the data isn't hallucinated? The right answer: We have validation and fallback logic, and we define where fields can be tagged with the "nullable" parameter when the AI is unsure.

- At what stage do we catch errors so the system doesn't perform a completely irreversible action? Don't sign off on this step until the technical team has implemented policy interception hooks based on detailed, explicit rules.

Going back to the Anthropic certification: from everything we can see publicly, it feels like a clean-up and maturation step for the industry. Fewer demos doing fancy tricks, more stability, clarity, and reliability grounded in a set of architectural rules and principles we can all apply.

Sources & Useful Links

- Original X (Twitter) Thread: @hooeem on Claude Architect

- Official Anthropic Website: anthropic.com